
EXPEDIA GROUP TECHNOLOGY — SOFTWARE

How to Upload Large Files to AWS S3

Using Amazon's CLI to reliably upload up to 5 terabytes

In a single operation, you can upload up to 5 GB into an AWS S3 object. The size of an object in S3 can range from a minimum of 0 bytes to a maximum of 5 terabytes, so if you want to upload an object larger than 5 gigabytes, you need to either use multipart upload or split the file into logical chunks of up to 5 GB and upload them manually as regular uploads. I will explore both options.
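The size rule above can be sketched as a small check that runs before choosing an upload method. The function names `fits_single_upload` and `file_size` are hypothetical helpers, and treating 5 GB as 5 × 1024³ bytes is an assumption made for illustration.

```shell
#!/usr/bin/env bash
# Assumption: the 5 GB single-upload limit is taken as 5 * 1024^3 bytes.
SINGLE_UPLOAD_LIMIT=$((5 * 1024 * 1024 * 1024))

# Hypothetical helper: succeeds (exit 0) if a size in bytes fits in a
# single upload, fails (exit 1) otherwise.
fits_single_upload() {
  local size_bytes=$1
  [ "$size_bytes" -le "$SINGLE_UPLOAD_LIMIT" ]
}

# Portable way to print a file's size in bytes.
file_size() {
  wc -c < "$1" | tr -d ' '
}
```

A possible use is `fits_single_upload "$(file_size test.csv.gz)" || echo "use multipart upload or split the file"`.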

Multipart upload

Performing a multipart upload requires splitting the file into smaller files, uploading them using the CLI, and verifying them. The file manipulations are demonstrated on a UNIX-like system.

1. Before you upload a file using the multipart upload process, calculate its base64 MD5 checksum value:

$ openssl md5 -binary test.csv.gz | base64
a3VKS0RazAmJUCO8ST90pQ==

2. Split the file into small files using the split command:

Syntax

split [-b byte_count[k|m]] [-l line_count] [file [name]]

Options

-b Create smaller files byte_count bytes in length.

`k' = kilobyte pieces

`m' = megabyte pieces

-l Create smaller files line_count lines in length.

Splitting the file into 4GB blocks:

$ split -b 4096m test.csv.gz test.csv.gz.part-
$ ls -l test*
-rw-r--r--@ 1 user1 staff 7827069512 Aug 26 16:20 test.csv.gz
-rw-r--r--  1 user1 staff 4294967296 Aug 26 16:36 test.csv.gz.part-aa
-rw-r--r--  1 user1 staff 3532102216 Aug 26 16:36 test.csv.gz.part-ab
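Before uploading, it can be worth confirming that the pieces still add up to the original file. The sketch below reuses the checksum pipeline from step 1; `b64_md5` and `verify_parts` are hypothetical helper names, and the check assumes the part files sort in the right order when expanded by the shell glob, which holds for the `aa`, `ab`, ... suffixes that split produces.

```shell
#!/usr/bin/env bash
# Base64-encoded MD5 of whatever arrives on stdin (same pipeline as step 1).
b64_md5() {
  openssl md5 -binary | base64
}

# Hypothetical helper: succeeds if the files named "<prefix>*", concatenated
# in glob (alphabetical) order, have the same checksum as the original file.
verify_parts() {
  local original=$1 prefix=$2
  local want got
  want=$(b64_md5 < "$original")
  got=$(cat "$prefix"* | b64_md5)
  [ "$want" = "$got" ]
}
```

For the files in this article the call would be `verify_parts test.csv.gz test.csv.gz.part-`.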

3. Now initiate the multipart upload using the create-multipart-upload command. If the checksum that Amazon S3 calculates during the upload doesn't match the value that you entered, Amazon S3 won't store the object. Instead, you receive an error message in response. This step generates an upload ID, which is used to upload each part of the file in the next steps:

$ aws s3api create-multipart-upload \
    --bucket bucket1 \
    --key temp/user1/test.csv.gz \
    --metadata md5=a3VKS0RazAmJUCO8ST90pQ== \
    --profile dev
{
    "AbortDate": "2020-09-03T00:00:00+00:00",
    "AbortRuleId": "deleteAfter7Days",
    "Bucket": "bucket1",
    "Key": "temp/user1/test.csv.gz",
    "UploadId": "qk9UO8...HXc4ce.Vb"
}

Explanation of the options:

--bucket bucket name

--key object name (can include the path of the object if you want to upload to a specific path)

--metadata Base64 MD5 value generated in step 1

--profile CLI credentials profile name, if you have multiple profiles
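Since the upload ID is needed by every later command, it may be convenient to capture it in a shell variable rather than copy it from the JSON output. The sketch below is one way to do that using the CLI's global `--query` and `--output` options; `start_upload` is a hypothetical wrapper name and its four arguments are placeholders supplied by the caller.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper around step 3: prints only the UploadId field.
# --query (a JMESPath expression) and --output are global AWS CLI options.
start_upload() {
  local bucket=$1 key=$2 md5=$3 profile=$4
  aws s3api create-multipart-upload \
    --bucket "$bucket" \
    --key "$key" \
    --metadata "md5=$md5" \
    --profile "$profile" \
    --query UploadId \
    --output text
}
```

With the values used in this article, that would look like `UPLOAD_ID=$(start_upload bucket1 temp/user1/test.csv.gz "a3VKS0RazAmJUCO8ST90pQ==" dev)`.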

4. Next, upload the first smaller file from step 2 using the upload-part command. This step generates an ETag, which is used in later steps:

$ aws s3api upload-part \
    --bucket bucket1 \
    --key temp/user1/test.csv.gz \
    --part-number 1 \
    --body test.csv.gz.part-aa \
    --upload-id qk9UO8...HXc4ce.Vb \
    --profile dev
{
    "ETag": "\"55acfb877ace294f978c5182cfe357a7\""
}

In which:

--part-number file part number

--body file name of the part being uploaded

--upload-id upload ID generated in step 3

5. Upload the second and final part using the same upload-part command with --part-number 2 and the second part's filename:

$ aws s3api upload-part \
    --bucket bucket1 \
    --key temp/user1/test.csv.gz \
    --part-number 2 \
    --body test.csv.gz.part-ab \
    --upload-id qk9UO8...HXc4ce.Vb \
    --profile dev
{
    "ETag": "\"931ec3e8903cb7d43f97f175cf75b53f\""
}
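With more than a couple of parts, repeating the upload-part command by hand gets tedious. The loop below is a minimal sketch of steps 4 and 5 generalized to any number of parts; `upload_all_parts` is a hypothetical helper name, and it assumes the part files sort correctly in glob order so that part numbers follow file order.

```shell
#!/usr/bin/env bash
# Hypothetical loop over steps 4 and 5: uploads every "<prefix>*" file in
# glob order and prints one "part_number etag" line per uploaded part.
upload_all_parts() {
  local bucket=$1 key=$2 upload_id=$3 prefix=$4 profile=$5
  local part_number=1 part_file etag
  for part_file in "$prefix"*; do
    # --query ETag --output text prints just the ETag value.
    etag=$(aws s3api upload-part \
      --bucket "$bucket" \
      --key "$key" \
      --part-number "$part_number" \
      --body "$part_file" \
      --upload-id "$upload_id" \
      --profile "$profile" \
      --query ETag \
      --output text) || return 1   # stop at the first failed part
    printf '%s %s\n' "$part_number" "$etag"
    part_number=$((part_number + 1))
  done
}
```

The loop stops at the first failure so that a missing part is noticed before the upload is completed.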

6. To make sure all the parts have been uploaded successfully, you can use the list-parts command, which lists all the parts that have been uploaded so far:

$ aws s3api list-parts \
    --bucket bucket1 \
    --key temp/user1/test.csv.gz \
    --upload-id qk9UO8...HXc4ce.Vb \
    --profile dev
{
    "Parts": [
        {
            "PartNumber": 1,
            "LastModified": "2020-08-26T22:02:06+00:00",
            "ETag": "\"55acfb877ace294f978c5182cfe357a7\"",
            "Size": 4294967296
        },
        {
            "PartNumber": 2,
            "LastModified": "2020-08-26T22:23:13+00:00",
            "ETag": "\"931ec3e8903cb7d43f97f175cf75b53f\"",
            "Size": 3532102216
        }
    ],
    "Initiator": {
        "ID": "arn:aws:sts::575835809734:assumed-role/dev/user1",
        "DisplayName": "dev/user1"
    },
    "Owner": {
        "DisplayName": "aws-account-00183",
        "ID": "6fe75e...e04936"
    },
    "StorageClass": "STANDARD"
}

7. Next, create a JSON file containing the ETags of all the parts:

$ cat partfiles.json
{
    "Parts": [
        {
            "PartNumber": 1,
            "ETag": "55acfb877ace294f978c5182cfe357a7"
        },
        {
            "PartNumber": 2,
            "ETag": "931ec3e8903cb7d43f97f175cf75b53f"
        }
    ]
}

8. Finally, finish the upload process using the complete-multipart-upload command as below:

$ aws s3api complete-multipart-upload \
    --multipart-upload file://partfiles.json \
    --bucket bucket1 \
    --key temp/user1/test.csv.gz \
    --upload-id qk9UO8...HXc4ce.Vb \
    --profile dev
{
    "Expiration": "expiry-date=\"Fri, 27 Aug 2021 00:00:00 GMT\", rule-id=\"deleteafter365days\"",
    "VersionId": "TsD.L4ywE3OXRoGUFBenX7YgmuR54tY5",
    "Location": "https://bucket1.s3.us-east-1.amazonaws.com/temp%2Fuser1%2Ftest.csv.gz",
    "Bucket": "bucket1",
    "Key": "temp/user1/test.csv.gz",
    "ETag": "\"af58d6683d424931c3fd1e3b6c13f99e-2\""
}
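The parts file from step 7 does not have to be typed by hand. As a sketch, the hypothetical helper `make_parts_json` below turns lines of the form "part number, whitespace, ETag" into the JSON shape shown in step 7, stripping the double quotes the CLI prints around each ETag.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads "part_number etag" lines on stdin and prints
# the JSON document passed to --multipart-upload. Double quotes around
# each ETag are stripped before the value is re-quoted as a JSON string.
make_parts_json() {
  local part_number etag sep=""
  printf '{\n  "Parts": ['
  tr -d '"' | while read -r part_number etag; do
    printf '%s\n    {"PartNumber": %s, "ETag": "%s"}' "$sep" "$part_number" "$etag"
    sep=","
  done
  printf '\n  ]\n}\n'
}
```

One way to feed it, assuming the list-parts call from step 6, is to add `--query 'Parts[].[PartNumber,ETag]' --output text` to that command and pipe the result into `make_parts_json > partfiles.json`.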

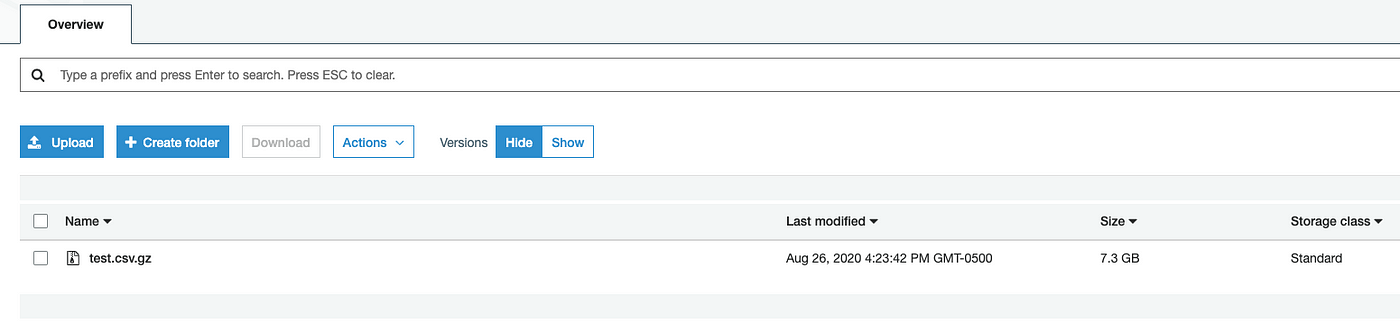

9. Now our file object is uploaded into S3.

The following table provides multipart upload core specifications. For more information, see Multipart upload overview.

Finally, multipart upload is a useful way to store the file as one object in S3 instead of uploading it as multiple objects (each less than 5 GB).

Split and upload

The multipart upload process requires special permissions, which can be time-consuming to obtain in many organizations. You can instead split the file manually and do a regular upload of each part.

Here are the steps:

1. Unzip the file if it is a zip file.

2. Split the file based on the number of lines in each file. If it is a CSV file, you can use parallel --header to copy the header to each split file. I am splitting here after every 2M records:

$ cat test.csv \
    | parallel --header : --pipe -N2000000 'cat >file_{#}.csv'

3. Zip the files back using the gzip <filename> command and upload each file manually as a regular upload.
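If GNU parallel is not installed, the header-preserving split from step 2 can be approximated with awk. This is a sketch of an alternative technique, not the method used above: `split_csv_with_header` is a hypothetical helper, the output names only mimic the `file_{#}.csv` pattern, and it assumes every record is exactly one line (no quoted fields containing newlines).

```shell
#!/usr/bin/env bash
# Hypothetical alternative to GNU parallel: splits a CSV into files of at
# most <rows> data rows named <out_prefix>1.csv, <out_prefix>2.csv, ...,
# repeating the header line at the top of each one.
split_csv_with_header() {
  local csv=$1 rows=$2 out_prefix=$3
  awk -v rows="$rows" -v prefix="$out_prefix" '
    NR == 1 { header = $0; next }     # remember the header line
    (NR - 2) % rows == 0 {            # first data row of a new chunk
      if (out != "") close(out)
      out = prefix (++n) ".csv"
      print header > out
    }
    { print > out }                   # copy the data row
  ' "$csv"
}
```

For the example above, the call would be `split_csv_with_header test.csv 2000000 file_`, followed by `gzip file_*.csv`.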


Source: https://medium.com/expedia-group-tech/how-to-upload-large-files-to-aws-s3-200549da5ec1
